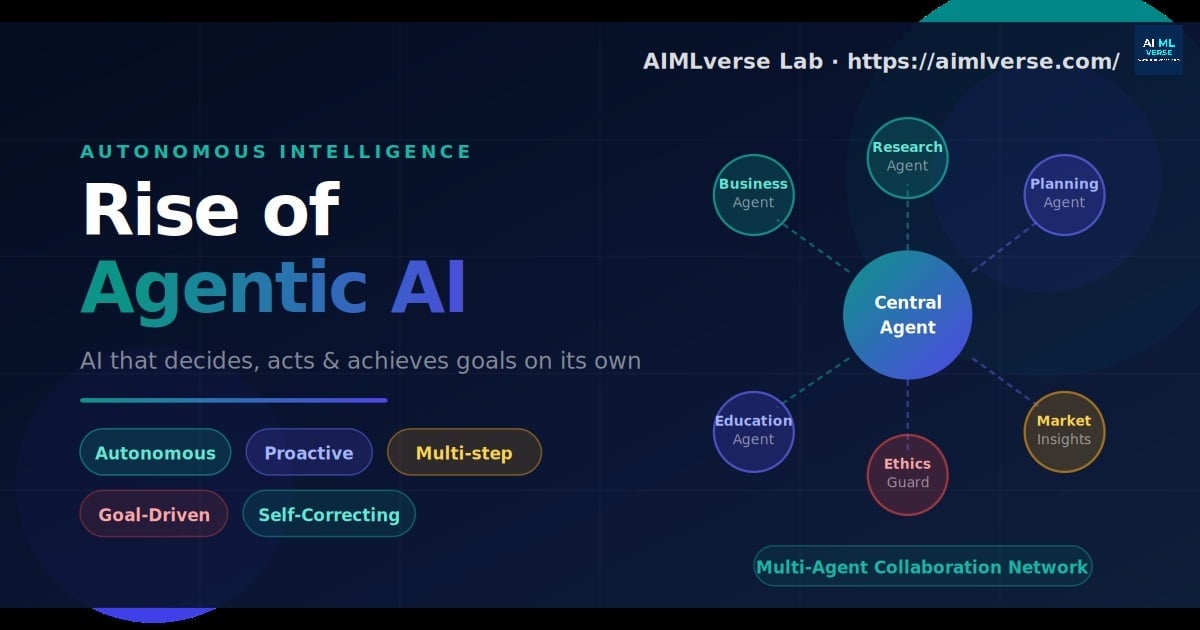

Rise of Agentic AI

AI systems no longer wait for your prompt. They plan, decide, and act autonomously. This piece unpacks what agentic AI means for every industry and why intent now matters as much as intelligence.

Read Featured Article →

Explore our curated reading material, case studies, and engineering articles designed for the AI-first enterprise.

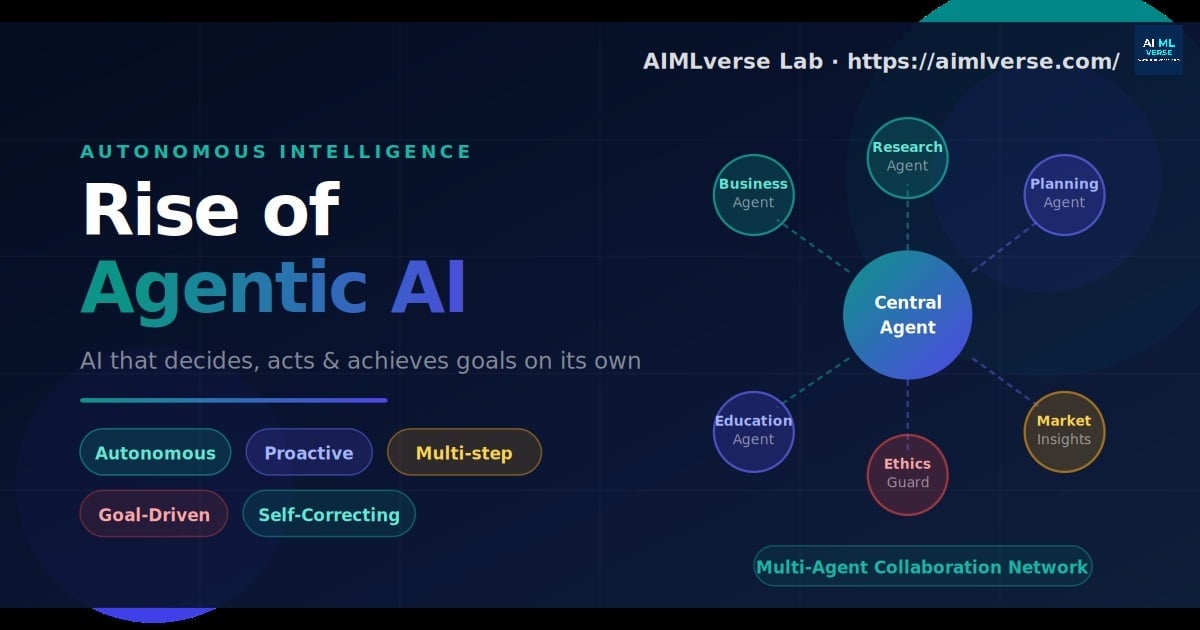

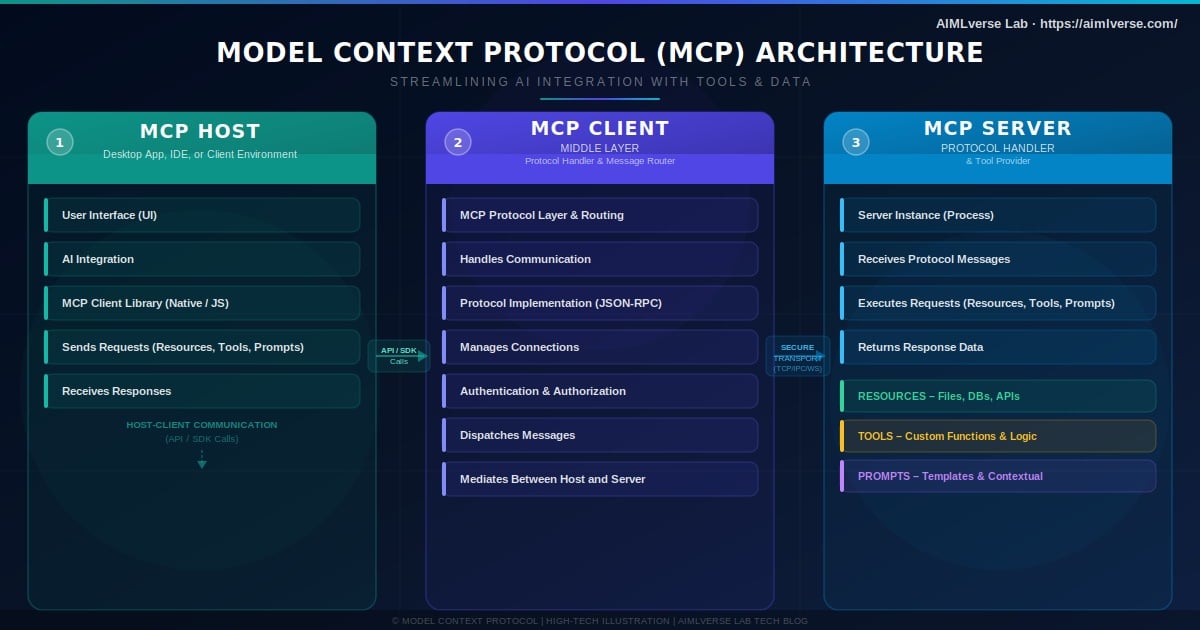

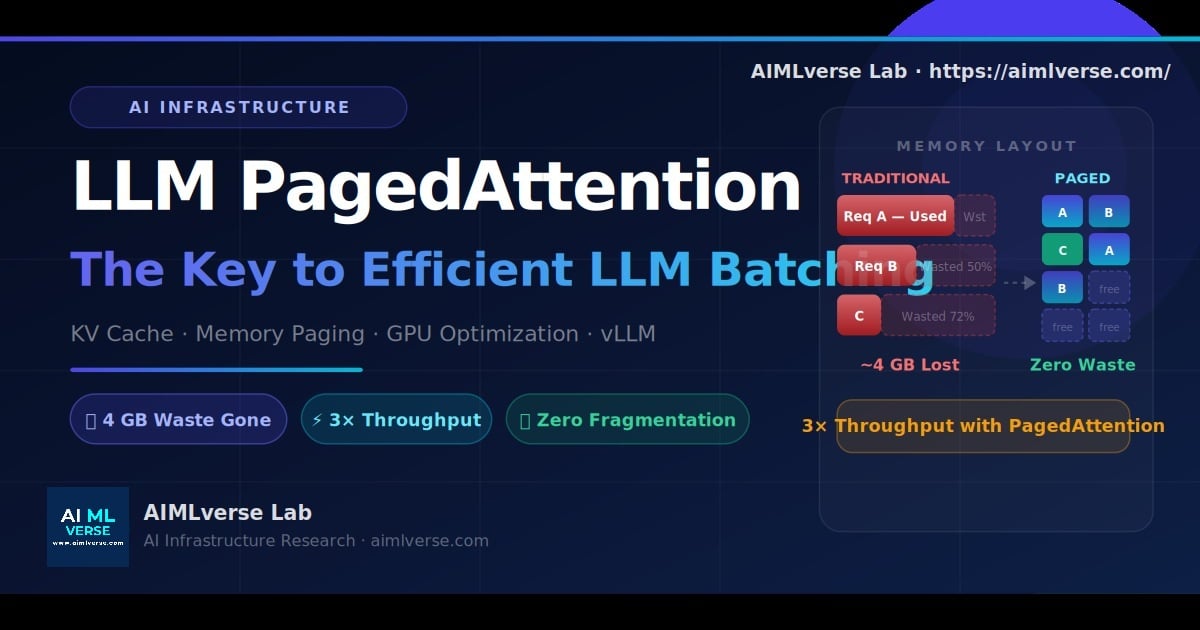

A practical breakdown of how KV caching and intelligent batching cut inference cost from $0.49 to $0.16 per million tokens, with real GPU math and deployment implications.
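As a quick check on what those two prices imply, the arithmetic can be sketched as below. Only the $0.49 and $0.16 figures come from the text; the function name `cost_reduction` is a hypothetical helper for illustration, and the article's actual GPU math is not reproduced here.

```python
def cost_reduction(before: float, after: float) -> tuple[float, float]:
    """Return (fractional saving, before/after ratio) for two per-million-token prices."""
    saving = (before - after) / before  # share of the original cost removed
    ratio = before / after              # how many times cheaper the new price is
    return saving, ratio


# Prices quoted in the teaser above, in USD per million tokens.
saving, ratio = cost_reduction(0.49, 0.16)
print(f"saving: {saving:.1%}, ratio: {ratio:.2f}x")  # saving: 67.3%, ratio: 3.06x
```

In other words, the quoted figures correspond to roughly a two-thirds reduction in per-token inference cost.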

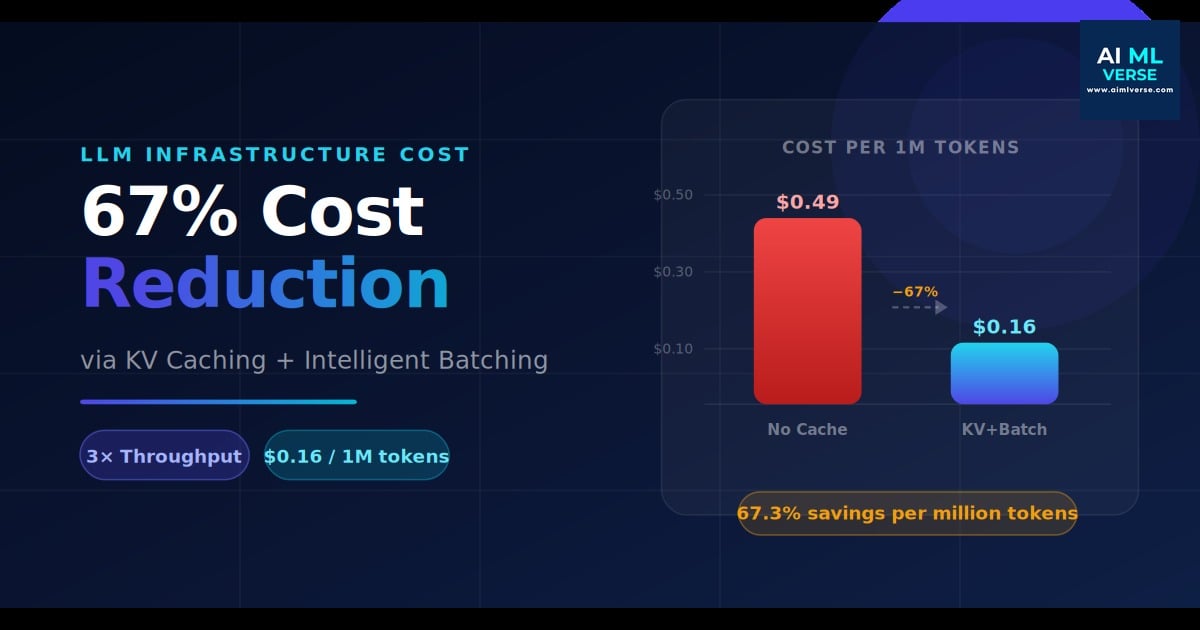

Why MCP is becoming the missing link between AI systems and external tools, APIs, and datasets.

Read Article →

See how paged memory allocation fixes KV cache fragmentation and unlocks more efficient large-scale inference.

Read Article →

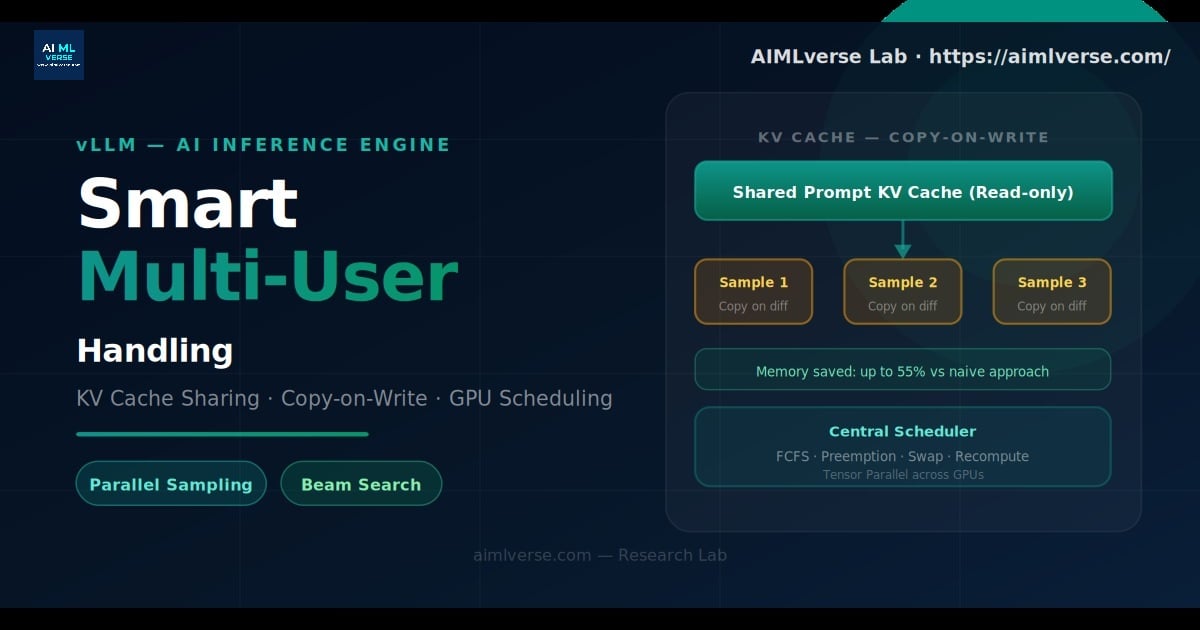

A deep dive into batching mixed requests, beam search, and copy-on-write KV cache sharing in vLLM.

Read Deep Dive →

Implementing safeguards in autonomous workflows for accountability.

Coming soon

Why legacy moats are disappearing and what MSMEs can do to stay aggressively competitive in an AI-first era.

Coming soon

Explore the strategies and architectures to prevent prompt injection and secure your enterprise AI models.

Coming soon

Common queries about Enterprise AI, Agentic Automation, and Custom LLM Deployment.

Agentic Automation uses autonomous AI agents (powered by LLMs) that reason, plan, and execute complex workflows dynamically. Unlike traditional RPA (Robotic Process Automation), which relies on rigid, rule-based scripts, agentic AI can adapt to exceptions, understand unstructured text, and make context-aware decisions.

The ROI of Enterprise AI is measured through tangible gross-margin improvements, such as fewer manual processing hours, higher throughput in document handling (via Document AI), and faster decision-making cycles. Our AI ROI framework maps engineering spend against these measurable operational KPIs.

Custom LLM platforms provide complete data privacy, allowing enterprises to securely process proprietary data without leaking it to public models. Furthermore, custom platforms enable multi-model routing to optimize for cost and latency, ensuring predictable, secure, and compliant production deployments.
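One way to picture multi-model routing is as a small policy that picks the cheapest model meeting a request's quality and latency needs. The sketch below is a minimal illustration under stated assumptions: the model names, prices, latencies, and quality tiers are all hypothetical, and a production router would likely weigh more signals than these three.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str             # hypothetical model identifier
    cost_per_mtok: float  # USD per million tokens (illustrative)
    p95_latency_ms: int   # illustrative 95th-percentile latency
    quality: int          # 1 (basic) to 5 (strongest), illustrative tier


def route(options: list[ModelOption], min_quality: int, max_latency_ms: int) -> ModelOption:
    """Pick the cheapest model that satisfies the quality and latency constraints."""
    eligible = [
        m for m in options
        if m.quality >= min_quality and m.p95_latency_ms <= max_latency_ms
    ]
    if not eligible:
        raise ValueError("no model satisfies the constraints")
    return min(eligible, key=lambda m: m.cost_per_mtok)


# Hypothetical catalog; none of these values come from a real deployment.
CATALOG = [
    ModelOption("small-local", 0.20, 300, 2),
    ModelOption("medium-hosted", 0.60, 600, 3),
    ModelOption("large-hosted", 3.00, 1500, 5),
]

choice = route(CATALOG, min_quality=3, max_latency_ms=1000)
print(choice.name)  # medium-hosted
```

Filtering on hard constraints first and then minimizing cost keeps the policy predictable: a request never lands on a model that is too slow or too weak just because it is cheap.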